Making science better — 5 years in

Five years ago, we set out to change the world through science. People told us we were bold and a bit insane; we were up against a daunting technical challenge – scientific text understanding – and a powerful publishing industry that does not welcome newcomers. Critics claimed that the world of Open Access was not powerful enough for literature reviews, and that it would never change. From the impact factor to the citation system and the urgent need to ‘publish or perish’, the challenges of the research world were vast. Where would we even start?

Open Access movement

We kept it simple. We started with our favourite people, academic researchers, and one of their most common frustrations: literature reviews. In the beginning, we connected to the Directory of Open Access Journals, which had about 2 million Open Access (OA) articles. It wasn’t much, but it was something.

As our products matured and the company and its user base grew, more and more Open Access articles were published. Today we connect with our friends at Core.ac.uk for access to 200 million metadata entries of OA articles. We connect to PubMed for 27 million articles, both open access and paywalled. We connect to the entire US Patent Office, the CORDIS database of funded research progress, and to the paywalled content of our clients, of course.

One blocker remains: the major publishers who won’t allow us to machine-read their publicly available titles and abstracts. (For now, we’re working our way around it with paying clients.) We will not back down; we will remain adamant supporters of Open Access until all of the world’s scientific knowledge, especially the tax-funded kind, is free and openly available to everyone.

Relentless focus

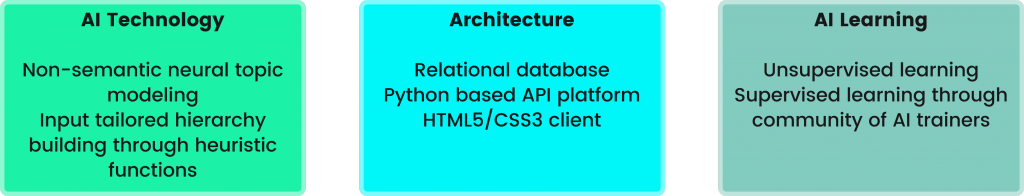

Access to scientific content aside, what we have managed to achieve in the last five years is exciting! We’ve implemented, and continue to develop, world-leading solutions for document similarity and word embeddings. Our core engine is among the world’s most sophisticated when it comes to scientific text understanding. And, what I am perhaps prouder of than anything: we’ve remained focused. Countless times, others have asked for tools for other kinds of material – from legal texts to Twitter messages. We have repeatedly stood our ground, rejected what could have been financially rewarding opportunities, and kept working on what matters to us – and, we believe, to the world.

And, most importantly, we have built a suite of tools that is helping thousands of students and researchers across the world – entirely bypassing the biased citation system – to do the most boring part of their job way more efficiently. My favourite moments five years on are when people tell me we totally saved their thesis work, or their project, or their research grant application.

The road ahead

Challenges remain. There are technical hurdles as we work with domain-aware embeddings. We continue to fight for access to paywalled content and the ability to machine-read its abstracts. And we face a range of problems in making scientific research more accessible, navigable, and understandable.

Challenge still accepted.