P3. Document cluster summaries and labelling

RQ: What is the best way to label a cluster of documents with a sequence of words? (A “sequence of words” need not be a sentence. It can be a list of words that rank highly on some statistical measure.)

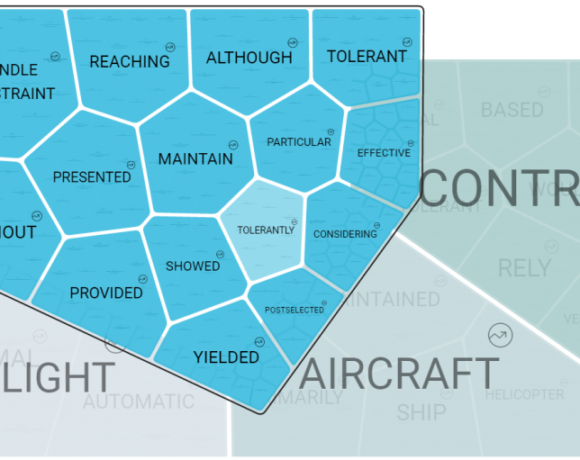

Description: At Iris AI we cluster documents based on topic models. In essence, a topic model is an empirical representation of two statistical distributions: that of topics in a document and that of words in a topic. Based on these models, documents and words are clustered under topics. To communicate the document clusters to humans, we need to label these clusters systematically.

Labeling these clusters with the corresponding word clusters seems the most obvious approach. However, the aggregated words, sorted by their statistical properties in a topic, are often cryptic and difficult for a reader to comprehend. In most situations, presenting this raw list of aggregated words directly to humans is not optimal.
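The raw labelling approach described above can be sketched as follows. This is only an illustration under assumptions: the function name `cluster_by_topic`, the toy distributions, and the rule of assigning each document to its single most probable topic are hypothetical and are not taken from the Iris AI pipeline.

```python
def cluster_by_topic(doc_topic, topic_word, vocab, top_n=3):
    """Group documents by dominant topic and list the top words of each topic.

    doc_topic[d][k]  : assumed P(topic k | document d)
    topic_word[k][v] : assumed P(word v | topic k)
    vocab[v]         : the word at vocabulary index v
    """
    # Assumption: hard assignment of each document to its most probable topic.
    clusters = {k: [] for k in range(len(topic_word))}
    for d, dist in enumerate(doc_topic):
        dominant = max(range(len(dist)), key=lambda k: dist[k])
        clusters[dominant].append(d)

    # Raw label: the top_n most probable words of each topic.
    raw_labels = {}
    for k, dist in enumerate(topic_word):
        ranked = sorted(range(len(vocab)), key=lambda v: -dist[v])
        raw_labels[k] = [vocab[v] for v in ranked[:top_n]]
    return clusters, raw_labels


# Toy example with two topics and a four-word vocabulary.
vocab = ["quantum", "energy", "cell", "gene"]
topic_word = [[0.5, 0.4, 0.05, 0.05], [0.05, 0.15, 0.4, 0.4]]
doc_topic = [[0.9, 0.1], [0.2, 0.8], [0.6, 0.4]]
clusters, raw_labels = cluster_by_topic(doc_topic, topic_word, vocab, top_n=2)
```

Here documents 0 and 2 fall under topic 0 with the raw label `["quantum", "energy"]`, and document 1 falls under topic 1 with `["cell", "gene"]`. With a realistic vocabulary, such top-word lists tend to be much harder to read, which is the problem this project addresses.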

The goal of this project is to find a way to label clusters of documents with words based on their relevance or other classification criteria, such that the sequence of words in the label describes the cluster as closely as possible to a human annotation (sequence of tags).
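One simple way to quantify how close a generated label is to a human annotation is set overlap between the label words and the human tags. The sketch below is an assumption made for illustration: the project description does not prescribe a metric, and the function name `label_overlap` is hypothetical. It also ignores word order, so it treats the label as a set rather than a sequence.

```python
def label_overlap(predicted, reference):
    """Compare predicted label words with human tags, ignoring case and order."""
    pred = {w.lower() for w in predicted}
    ref = {w.lower() for w in reference}
    shared = pred & ref
    union = pred | ref
    return {
        # Fraction of predicted words that a human also used.
        "precision": len(shared) / len(pred) if pred else 0.0,
        # Fraction of human tags that the label recovered.
        "recall": len(shared) / len(ref) if ref else 0.0,
        # Shared words relative to all distinct words in either list.
        "jaccard": len(shared) / len(union) if union else 0.0,
    }


scores = label_overlap(
    ["quantum", "energy", "state"],
    ["Quantum", "physics", "energy", "particle"],
)
```

In this toy case two of the three predicted words match the four human tags, giving a precision of 2/3, a recall of 0.5, and a Jaccard score of 0.4. An order-sensitive or embedding-based similarity may be more appropriate if near-synonyms should count as matches.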

Recent applications of the rotational unit of memory (RUM [1]) have been reported to be successful in summarizing texts. In this project we will explore the applicability of RUM, or of other algorithms such as recurrent neural networks (RNNs), to cluster labeling.

Tasks:

1. Review the literature on current algorithms for topic models and document-cluster labeling.

2. Create a baseline for document-cluster labeling using combinations of TF-IDF, topic-modeling, and word-embedding algorithms.

3. Develop an alternative cluster-labeling algorithm based on more complex models such as RUM or RNNs.

4. Benchmark the algorithm from task 3 against the baseline algorithm and the existing annotated data.
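As an illustration of the TF-IDF part of the baseline task, the sketch below scores each word by its frequency inside a cluster, discounted by the number of clusters it appears in, and takes the top-scoring words as the label. It is a minimal sketch under assumptions: the function name `label_clusters`, the pre-tokenized input format, and the choice to compute the inverse frequency over clusters rather than over documents are all hypothetical. The topic-modeling and word-embedding components of the baseline are not covered.

```python
import math
from collections import Counter


def label_clusters(clusters, top_k=3):
    """Label each cluster with its top_k words by a cluster-level TF-IDF score.

    clusters: dict mapping a cluster id to a list of tokenized documents
              (each document is a list of words).
    """
    # Term counts per cluster, pooling all documents in the cluster.
    term_counts = {
        cid: Counter(word for doc in docs for word in doc)
        for cid, docs in clusters.items()
    }
    # Number of clusters in which each word occurs.
    cluster_freq = Counter(
        word for counts in term_counts.values() for word in counts
    )
    n_clusters = len(clusters)

    labels = {}
    for cid, counts in term_counts.items():
        total = sum(counts.values())
        scores = {
            word: (count / total) * math.log(n_clusters / cluster_freq[word])
            for word, count in counts.items()
        }
        # Sort by descending score, breaking ties alphabetically.
        ranked = sorted(scores, key=lambda w: (-scores[w], w))
        labels[cid] = ranked[:top_k]
    return labels


example = {
    "physics": [["quantum", "state", "energy", "the"],
                ["energy", "particle", "the", "quantum"]],
    "biology": [["cell", "protein", "the", "gene"],
                ["gene", "cell", "the", "energy"]],
}
print(label_clusters(example, top_k=2))
# {'physics': ['quantum', 'particle'], 'biology': ['cell', 'gene']}
```

Words that occur in every cluster, such as "the" and "energy" in this toy data, receive a score of zero and drop out of the labels. A label produced this way could serve as the reference point against which a RUM- or RNN-based labeler is benchmarked.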

[1] Rumen Dangovski, Li Jing, Preslav Nakov, Mico Tatalovic, Marin Soljacic. Rotational Unit of Memory: A Novel Representation Unit for RNNs with Scalable Applications.