What Is the AI Context Layer, And Why It Changes Everything About Enterprise AI

The Reality of the AI Adoption Gap

Enterprises are spending more on AI than ever before, yet the results consistently disappoint business leaders. Organizations have rushed to build chatbots and internal tools, only to run into brittle workflows and systems that do not learn from context. According to research by MIT NANDA (2025), 95% of enterprise AI pilots deliver zero measurable ROI. This is not because the foundation models are weak. The constraint is a lack of proper data context, and the resulting hallucinations cost enterprises an estimated $67.4B annually. The missing piece in the modern enterprise stack is the AI context layer.

Context vs. Guesswork: A Story of Two Queries

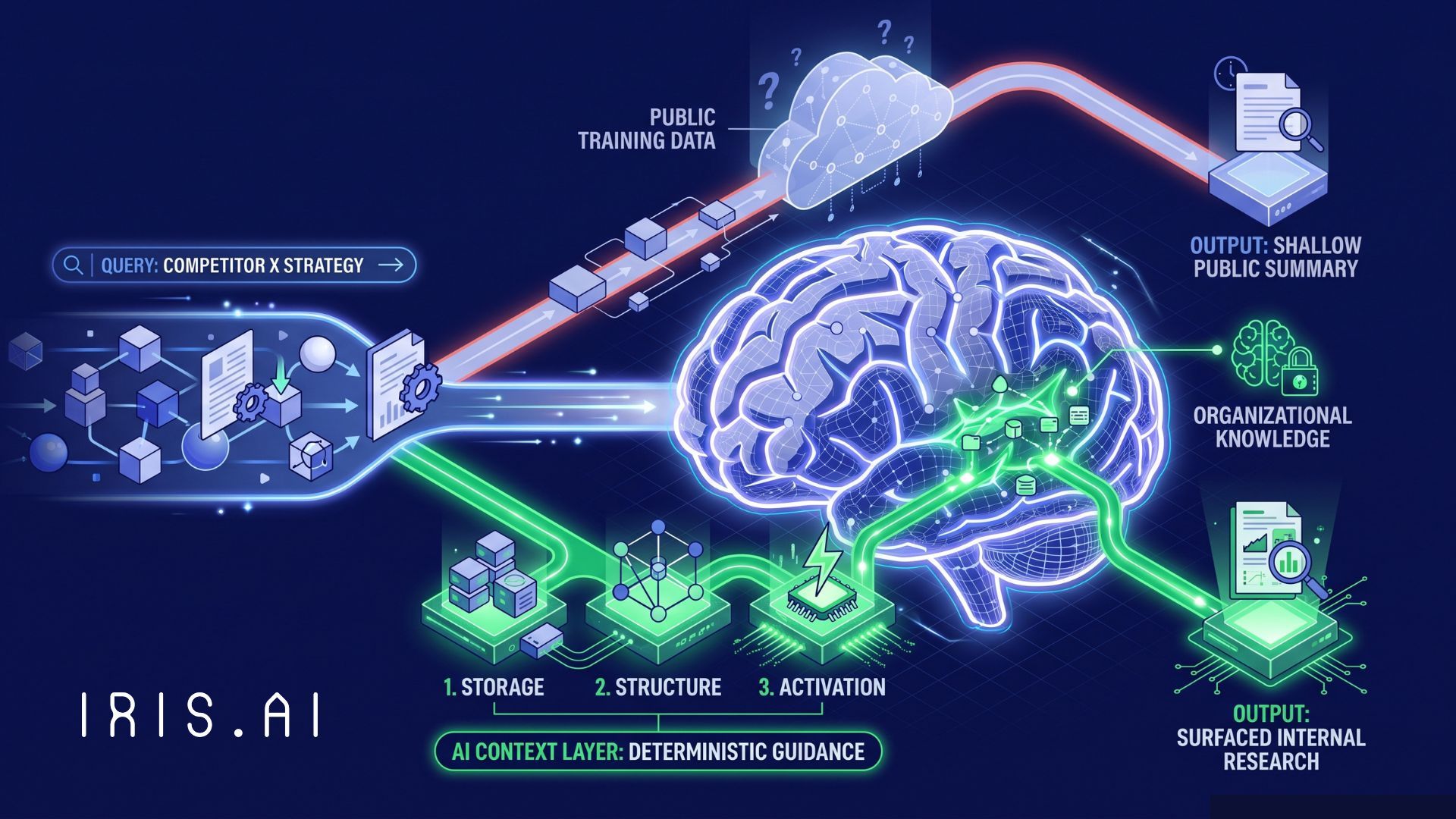

To understand the material difference this layer makes, consider a typical analyst query regarding competitive strategy. In a "before" scenario without a context layer, an analyst asks a generic AI about a competitor's market moves and receives a shallow, public-facing summary that any consumer could find. The model relies entirely on its parametric training data, which is often outdated or generic.

In the "after" scenario, that same query is processed through a dedicated context layer. This infrastructure instantly surfaces six months of internal, proprietary research and technical reports, allowing the model to reason from what your organization actually knows. Without this layer, the model is merely guessing. With it, every agent reads from a deterministic, expert-validated knowledge source. The context layer sits between raw enterprise data and model output to capture and deliver organizational knowledge at the exact point of inference. It is distinct from generic data management because it is purpose-built for AI reasoning rather than just reporting or storage.
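
To make the idea of a layer that sits between enterprise data and model output concrete, here is a minimal Python sketch. It is an illustration under stated assumptions, not a description of any vendor's implementation: the `Document` type, the `validated` flag, and the keyword-overlap ranking are all hypothetical stand-ins for whatever retrieval and governance logic a real context layer would use.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Document:
    """A hypothetical internal knowledge item with governance metadata."""
    title: str
    text: str
    validated: bool  # assumption: True once an expert has signed off


def select_context(query: str, store: list[Document], limit: int = 3) -> list[Document]:
    """Return validated documents ranked by naive keyword overlap with the query."""
    terms = set(query.lower().split())
    scored = []
    for doc in store:
        if not doc.validated:
            continue  # unvalidated material never reaches the model
        overlap = len(terms & set(doc.text.lower().split()))
        if overlap > 0:
            scored.append((overlap, doc))
    scored.sort(key=lambda pair: pair[0], reverse=True)
    return [doc for _, doc in scored[:limit]]


def build_prompt(query: str, store: list[Document]) -> str:
    """Assemble the text handed to a model: governed context first, then the question."""
    context = select_context(query, store)
    lines = [f"[{doc.title}] {doc.text}" for doc in context]
    return "Context:\n" + "\n".join(lines) + f"\n\nQuestion: {query}"
```

The point of the sketch is the ordering: filtering and ranking happen before the model sees anything, so the model reasons over material the organization has already vetted.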

The Three Phases of Infrastructure Evolution

The emergence of the context layer is the final step in a decade-long evolution of the enterprise AI stack:

- Phase One: Storage. In this era, organizations built massive data lakes and warehouses designed to capture and hold every byte of information.

- Phase Two: Structure. The rise of AI data foundations organized, cleaned, and governed that data so models could theoretically use it.

- Phase Three: Activation. This is the current phase, in which the context layer activates that substrate by delivering governed knowledge to AI systems at the moment of a query.

Foundations and the context layer work in tandem; the foundations act as the substrate, while the context layer activates that substrate for AI reasoning. Does your organization currently have the infrastructure to activate your data, or are you still just storing it?

Why Your Data Agents Need Context

As a16z recently noted in their analysis, "Your Data Agents Need Context," data and analytics agents are essentially useless without the right context because they cannot decipher business definitions or tribal knowledge across disparate systems. A deceptively simple question like "What was revenue growth last quarter?" is often a challenge for an agent because the definition of "revenue" is rarely hard-coded into a warehouse. It is tribal knowledge that varies by team and rests on historically contingent rules.
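
The revenue example can be sketched as a small lookup that makes team-specific definitions explicit instead of leaving them implicit. Everything here is hypothetical: the `MetricDefinition` type, the team names, and the formulas are illustrative placeholders, not real warehouse logic.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class MetricDefinition:
    """One team's governed definition of a business term (illustrative only)."""
    team: str
    expression: str  # placeholder formula, not a real warehouse query
    owner: str


# Assumption: two teams define "revenue" differently.
DEFINITIONS = {
    ("revenue", "finance"): MetricDefinition("finance", "recognized_revenue - refunds", "controller"),
    ("revenue", "sales"): MetricDefinition("sales", "sum(closed_won_bookings)", "sales_ops"),
}


def resolve_metric(term: str, team: str) -> MetricDefinition:
    """Look up the definition an agent should use, failing loudly if none is governed."""
    key = (term.lower(), team.lower())
    if key not in DEFINITIONS:
        raise LookupError(f"No governed definition of {term!r} for team {team!r}")
    return DEFINITIONS[key]
```

Raising an error for an unknown team is a deliberate choice in this sketch: an agent that cannot find a governed definition should stop rather than guess.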

A true context layer must be a superset of traditional semantic layers. It must encompass canonical entities, identity resolution, and domain-specific regulatory logic. At Iris.ai, we have spent ten years building deep tech that constructs this context from multimodal raw data, such as PDFs, engineering specifications, and technical images, sources that general-purpose layers cannot access.

Practical Implications for the Enterprise Stack

This shift in infrastructure has three practical implications for every enterprise AI buyer:

- Evaluation criteria must shift from benchmarking model speed to assessing context quality. You must measure how accurately your proprietary research and domain-specific terminology are represented before the AI provides an answer.

- Governance must move earlier in the stack to the point of knowledge construction. Whoever controls the context layer ultimately controls the quality, lineage, and compliance of the AI output.

- ROI conversations must change because the primary variable determining outcomes is the context you feed the system, not the model you choose. As foundation models commoditize, your proprietary knowledge infrastructure becomes your only sustainable structural advantage.
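
One simple way to start assessing context quality, as the first point above suggests, is to check how much of your domain terminology actually appears in the context assembled for a query. The function below is a rough sketch under that assumption; `terminology_coverage` is a hypothetical name, and substring matching is a deliberately crude proxy for real terminology resolution.

```python
def terminology_coverage(required_terms: list[str], context: str) -> float:
    """Fraction of required domain terms that appear in the assembled context."""
    if not required_terms:
        return 1.0  # nothing required, so nothing is missing
    text = context.lower()
    found = sum(1 for term in required_terms if term.lower() in text)
    return found / len(required_terms)
```

A score like this says nothing about whether the terms are used correctly, so it is best treated as a first filter before expert review rather than a measure of answer quality.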

Securing a Structural Advantage

Organizations that invest in their context layer now will possess a compounding asset that scales across every new workload. By building the ontology, terminology, and source authority rules before generation, you provide your AI with a governed environment for reasoning rather than a pile of retrieved fragments. Iris.ai provides this context layer purpose-built for enterprise knowledge work, using Axion for data unification and Neuralith for governed agent orchestration. Build your knowledge layer once, and power every AI agent your team ships forever.

See How Iris.ai Builds the Context Layer That Makes Enterprise AI Reliable